NVIDIA Repositions AI Data Centers as Active Grid Assets, Addressing Power Constraints

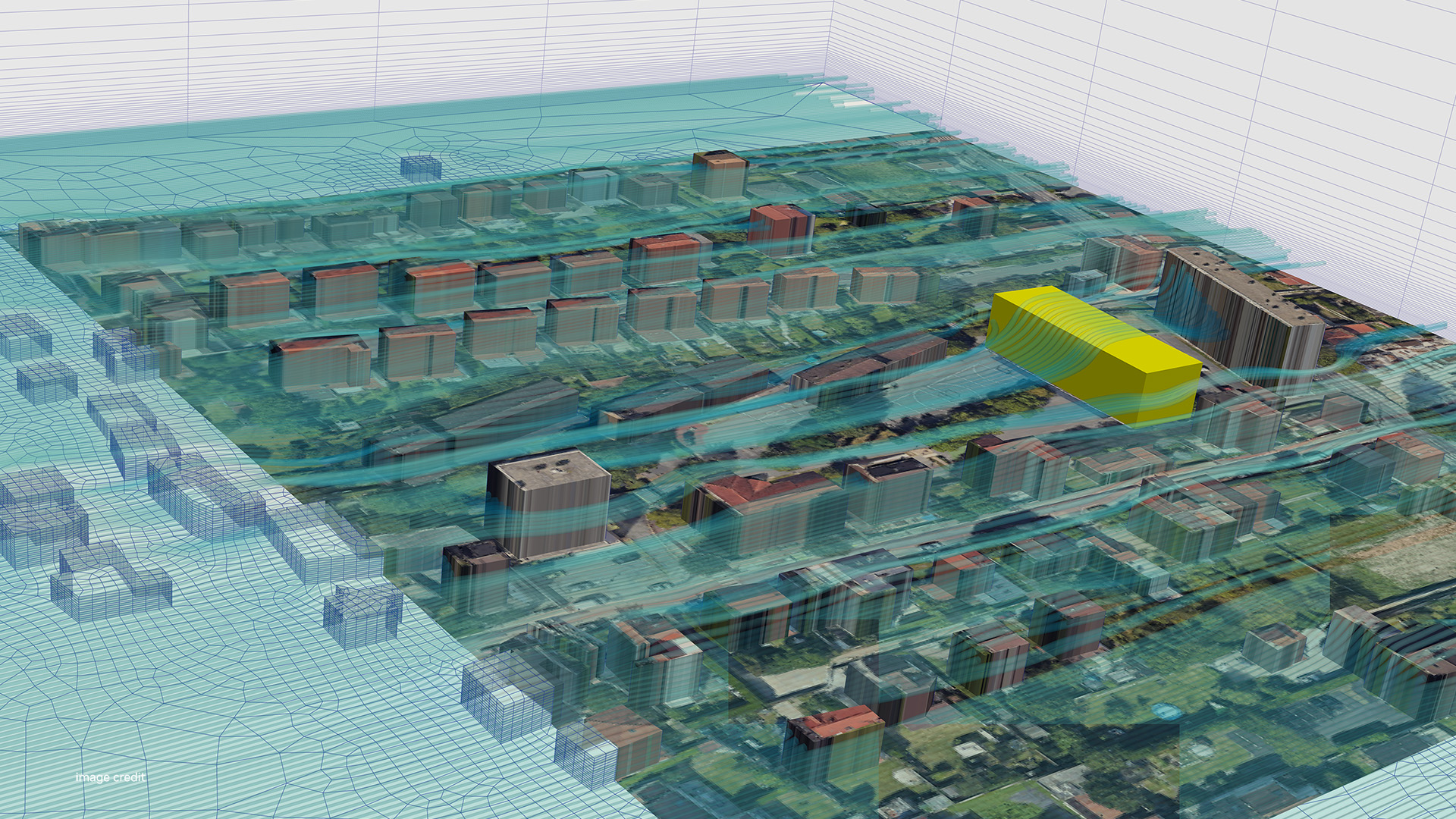

At CERAWeek, NVIDIA and Emerald AI unveiled a framework that lets AI data centers act as flexible grid assets rather than static loads. The move addresses AI's escalating energy consumption, a critical bottleneck threatening the industry's scaling trajectory. With hyperscalers such as Google and Meta openly flagging power availability as a primary constraint, the initiative reframes the problem from pure efficiency to active grid participation. It seeks to turn the industry's biggest liability, its insatiable power demand, into a stabilizing force. The model works by dynamically scheduling high-intensity AI workloads to align with periods of low grid demand or surplus renewable energy.

The clear winners are NVIDIA, whose hardware becomes more operationally viable at scale, and utility operators, who gain a powerful demand-response tool. The shift alters data center economics and puts legacy operators with inflexible power consumption at a disadvantage. It also forces a strategic recalculation for rivals AMD and Intel, who must now build their own energy-aware ecosystems or cede the high ground on sustainable AI infrastructure.

In the next 6 to 12 months, expect a wave of pilot programs integrating this technology with regional grid operators. Within three years, such power flexibility could become a non-negotiable requirement for regulatory approval of new large-scale data centers. The critical variable is the software layer's ability to orchestrate this energy-compute arbitrage without compromising model training integrity. This trajectory suggests a future where AI compute is priced and allocated based on real-time grid conditions, a fundamental rewiring of cloud economics.
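The scheduling idea described above, shifting deferrable AI workloads into hours of low grid demand or surplus renewable energy, can be illustrated with a minimal sketch. This is not NVIDIA's or Emerald AI's actual software. The names `GridHour`, `Job`, and `plan_workloads`, the forecast figures, and the greedy strategy are all hypothetical. The sketch also assumes that deferrable jobs can be paused and resumed between hours (for example via checkpointing), which is the kind of assumption the orchestration layer would have to guarantee in practice.

```python
from dataclasses import dataclass


@dataclass
class GridHour:
    hour: int            # hour index within the planning window
    demand_mw: float     # forecast grid demand (hypothetical figure)
    renewable_mw: float  # forecast renewable generation (hypothetical figure)

    @property
    def net_load_mw(self) -> float:
        # Lower net load means more grid headroom or surplus renewables.
        return self.demand_mw - self.renewable_mw


@dataclass
class Job:
    name: str
    hours_needed: int
    deferrable: bool  # True for workloads that can wait, e.g. batch training


def plan_workloads(jobs, forecast, capacity=1):
    """Greedy sketch: inflexible jobs take the earliest free hours,
    deferrable jobs take the free hours with the lowest net grid load.

    `capacity` is the number of jobs the site can run in the same hour.
    Assumes deferrable jobs may run in non-contiguous hours.
    """
    used = {h.hour: 0 for h in forecast}
    by_time = sorted(forecast, key=lambda h: h.hour)
    by_net_load = sorted(forecast, key=lambda h: (h.net_load_mw, h.hour))

    schedule = {}
    # Place inflexible jobs first (False sorts before True; sort is stable).
    for job in sorted(jobs, key=lambda j: j.deferrable):
        order = by_net_load if job.deferrable else by_time
        free = [h.hour for h in order if used[h.hour] < capacity]
        chosen = free[: job.hours_needed]
        if len(chosen) < job.hours_needed:
            raise ValueError(f"not enough free hours for job {job.name!r}")
        for hour in chosen:
            used[hour] += 1
        schedule[job.name] = sorted(chosen)
    return schedule


# Hypothetical six-hour forecast: hours 2 and 3 have surplus renewables.
forecast = [
    GridHour(0, 900, 100),
    GridHour(1, 800, 150),
    GridHour(2, 700, 400),
    GridHour(3, 650, 500),
    GridHour(4, 850, 200),
    GridHour(5, 950, 100),
]
jobs = [
    Job("inference", hours_needed=2, deferrable=False),
    Job("training", hours_needed=2, deferrable=True),
]
schedule = plan_workloads(jobs, forecast)
print(schedule)  # {'inference': [0, 1], 'training': [2, 3]}
```

In this toy run the latency-sensitive inference job keeps the first two hours, while the training job moves to hours 2 and 3, where forecast net load is lowest. A production system would also need to handle forecast error, price signals, and checkpoint overhead, none of which is modeled here.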