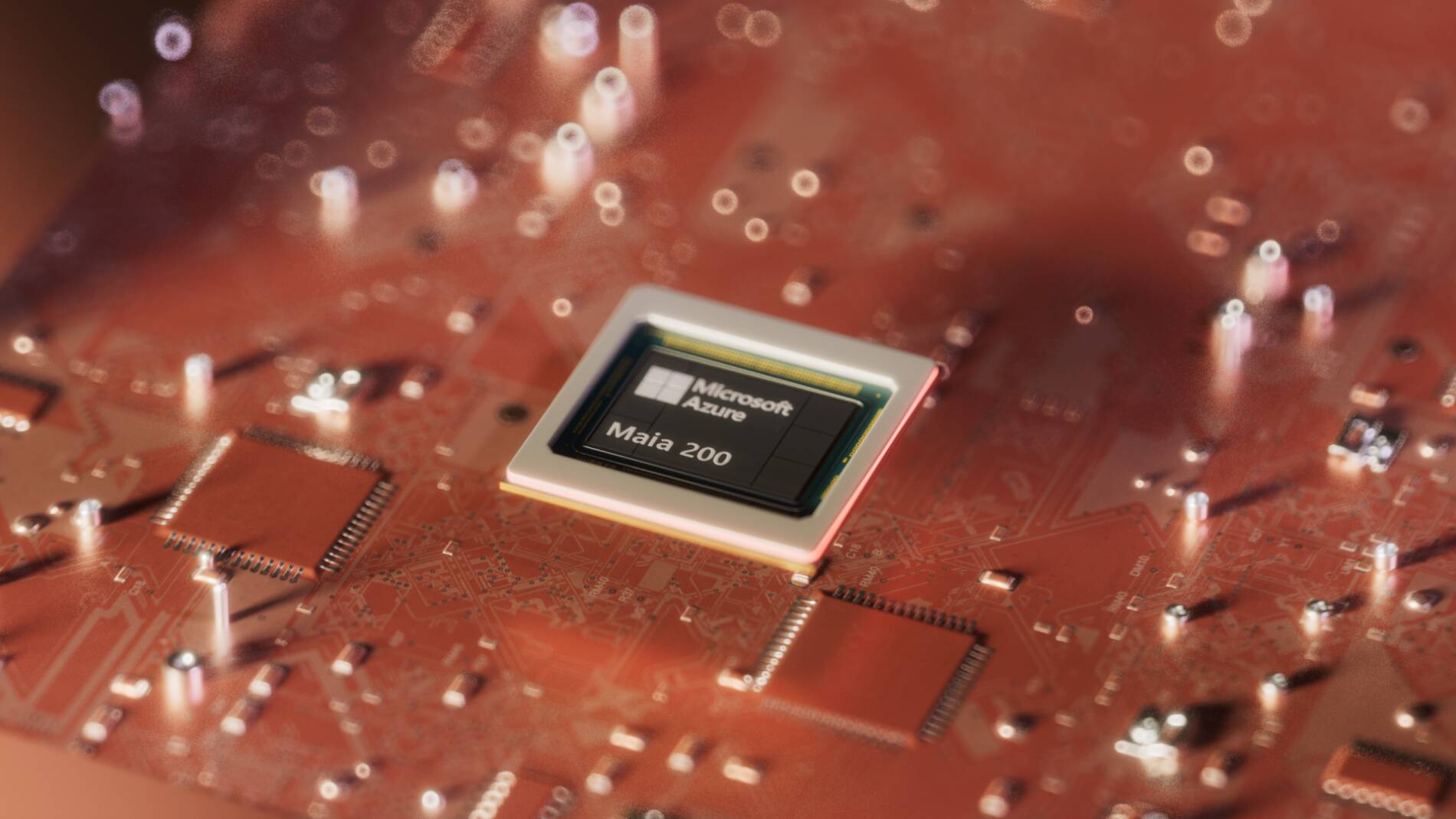

Maia 200: Microsoft Escalates AI Chip War, Pressuring Nvidia's Pricing Power

Microsoft has escalated its challenge to Nvidia's dominance by unveiling the Maia 200, an in-house AI accelerator designed to rival the performance of Nvidia's Blackwell generation. The move signals a strategic shift for Azure: reducing its dependence on external suppliers and taking control of its own hardware stack. By optimizing the chip for its own workloads, Microsoft is building not just a chip but a vertically integrated AI ecosystem, which marks an inflection point in the cloud infrastructure wars and in the broader semiconductor market.

The introduction of the Maia 200 immediately puts pressure on Nvidia's margins and market narrative by giving Azure's massive AI workloads a credible alternative. It could also reshape supply chain dynamics, pushing other cloud providers to accelerate their own silicon ambitions to remain competitive. The key question is how Nvidia will adapt its pricing and product strategy for its largest customers, which are now also its direct competitors in silicon design. However Nvidia responds, its answer will set a new precedent.