AI Content Suspicions Trigger Publisher Censorship Precedent

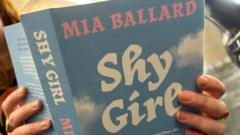

The publisher's cancellation of "Shy Girl," Mia Ballard's horror novel, over unsubstantiated claims that it was AI-generated marks a critical escalation in the AI culture war. It moves the conflict beyond disputes over training data, such as the ongoing New York Times v. OpenAI lawsuit, and into distribution and censorship. By de-platforming an author on the basis of algorithmic suspicion rather than verifiable proof, the publisher sets a chilling precedent. The move changes the terms of market access for creators and ranks perceived reputational safety above the author partnership, signaling a new era of risk for the entire creative sector.

The decision appears to have been driven by unreliable third-party AI detection tools and social media pressure. It recasts the publisher-author relationship from a partnership of trust into one of adversarial verification. This creates clear losers. The first are authors, particularly those with distinctive styles that might be flagged as non-human. The second are independent presses, which now carry a new and ambiguous due-diligence burden. The indirect winners are platforms such as Amazon's Kindle Direct Publishing, which can absorb de-platformed authors. Ironically, the vendors of the flawed detection software behind these corporate reactions also win, and their market share grows with industry panic.

In the near term, this trajectory points to a chilling effect on stylistic innovation. Authors may subconsciously avoid prose that could trigger false positives from crude detection algorithms. Within 12 months, expect the first author lawsuits challenging contract terminations based on these unreliable tools. The critical variable is not whether AI is used but how publishers respond. Publishers that adopt a rigid, prohibitionist stance built on flawed technology will cede the future to platforms that build sophisticated systems for verifying and labeling varying degrees of human-AI collaboration.